Articles tagged: ai-security

13 articles found

AI Browser Extensions Pose New Security Risks

AI tools and browser extensions introduce new security risks, including shadow AI and unguarded extension vulnerabilities. Organizations need to understand these threats and plan how to mitigate them. Stolen credentials can also turn authentication systems into an attack surface.

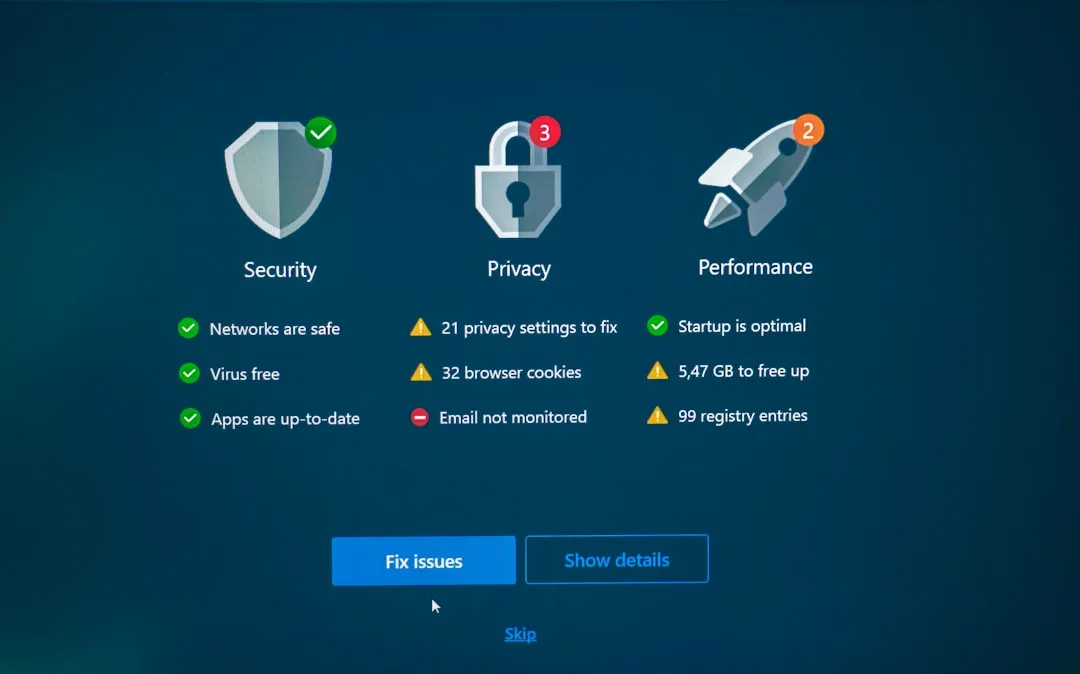

AI Security Risks Exposed

Recent attacks on Apple Intelligence and Grafana highlight growing concern over AI-related security risks. Enterprises are deploying AI without fully understanding those risks, including model collapse and adversarial abuse. Learn how to secure your AI-powered systems.

Ransomware & AI Threats Escalate

Hospitals face severe consequences from ransomware attacks, while over-privileged agents in Google's Vertex AI pose a security risk. Attackers are increasingly turning trusted tools against organizations, highlighting the need for vigilance and regular defensive rehearsals.

Google's Quantum-Safe Deadline Looms Amid Rising Threats

As Google sets a 2029 deadline for quantum-safe cryptography migration, OpenAI launches a bug bounty program to identify abuse and safety risks in its AI systems. Meanwhile, emerging threats from compromised IP cameras, AitM phishing pages, and malicious VS Code extensions pose significant security concerns.

High-Severity Exploits and Cybercrime Takedowns

Multiple high-severity exploits have been discovered, including one targeting a vulnerability in Aqua Security's Trivy. Meanwhile, cybercrime forums are being taken down and underground markets are selling paid AI accounts. Learn about the latest threats and how to protect yourself.

AI Assistants Redefine Security Risks

The growing use of AI assistants among developers and IT workers introduces new security risks. As these tools become more widespread, security professionals must reassess their priorities. This article explores the implications of AI assistants for organizational security and provides recommendations for mitigating the risks.

New Surveillance Threats Emerge

Researchers uncover methods to track cars via tire sensors, while Microsoft warns of OAuth redirect abuse and a new attack hijacks OpenClaw instances. These emerging threats highlight the need for increased security measures.

Ransomware Hits Sensitive Targets Amid AI Security Concerns

A recent ransomware attack on the University of Hawai'i Cancer Center highlights the importance of protecting sensitive data. Meanwhile, the increasing use of AI in software development poses new security challenges. Learn about these threats and how to mitigate them.

GitHub Copilot and OpenClaw Under Attack

High-severity vulnerabilities in GitHub Copilot and OpenClaw pose significant risks to users. Learn about the threats and how to protect yourself.

Zero-Day AI Threats and Cloud Security Updates

Critical zero-day vulnerabilities in AI systems pose significant threats, while cloud security enhancements offer new protections. Learn about the latest developments and how to stay secure.

AI and Crypto Under Siege

Critical vulnerabilities in OpenClaw AI and cryptocurrency wallets have led to significant financial losses, while notorious threat actors like Kimwolf continue to wreak havoc. Stay informed on the latest threats and learn how to protect yourself.

RoguePilot & SANDWORM_MODE Threats Uncovered

High-severity vulnerabilities in GitHub Codespaces and npm packages have been discovered, posing significant risks to developers and the software supply chain. The RoguePilot flaw and SANDWORM_MODE campaign highlight the need for vigilance in AI-driven development tools and open-source dependencies.

AI-Related Security Threats Escalate

Recent discoveries highlight growing concern over AI-related security threats, including vulnerabilities in GitHub Codespaces and industrial-scale campaigns by Chinese AI firms to extract capabilities from models like Claude. These threats pose significant risks to repository security and model integrity.