Introduction

A recent ransomware attack on the University of Hawai'i Cancer Center exposed sensitive data from a study that began in 1993, showing how much risk ransomware poses to high-value targets. The incident underscores the need for robust security measures in healthcare organizations, particularly controls that keep personal and confidential information from being exposed. The growing use of AI in development introduces further security challenges, including vulnerabilities in machine learning models and data poisoning attacks. Protecting sensitive data and securing the development of AI-driven applications are both critical to mitigating these risks.

The University of Hawai'i Cancer Center breach involved data from the Multiethnic Cohort (MEC) Study, established in 1993, which used driver's license numbers and voter registration records to recruit participants, according to The Record. The incident shows why healthcare organizations need to prioritize the protection of sensitive data and put robust defenses against ransomware in place. As AI becomes more prevalent in development, application developers and security teams also need to collaborate so that security is built into the development process.

The breach also highlights the importance of protecting legacy systems, which can be vulnerable to exploitation because of outdated software or missing security protocols. Here, attackers reached sensitive data tied to a study that started in 1993, which shows that every system needs to be properly secured regardless of its age.

Ransomware Attacks on Sensitive Targets

The University of Hawai'i Cancer Center has confirmed a data leak following a ransomware attack, with part of the breach traced back to a 1993 study, according to The Record. Ransomware attacks on targets like this can have significant consequences, including the exposure of personal and confidential information.

The exposed data came from the Multiethnic Cohort (MEC) Study, which was established in 1993 and recruited participants using driver's license numbers and voter registration records. Healthcare organizations that hold data like this need to prioritize its protection, since outdated systems and missing security protocols leave them vulnerable to ransomware.

In addition to technical vulnerabilities, ransomware attacks often rely on social engineering tactics to gain initial access to a system. This can include phishing emails or malicious communications that trick users into divulging sensitive information or clicking on links that install malware. To mitigate this risk, organizations should prioritize employee training on cybersecurity best practices, including identifying and avoiding suspicious emails and other types of social engineering attacks.

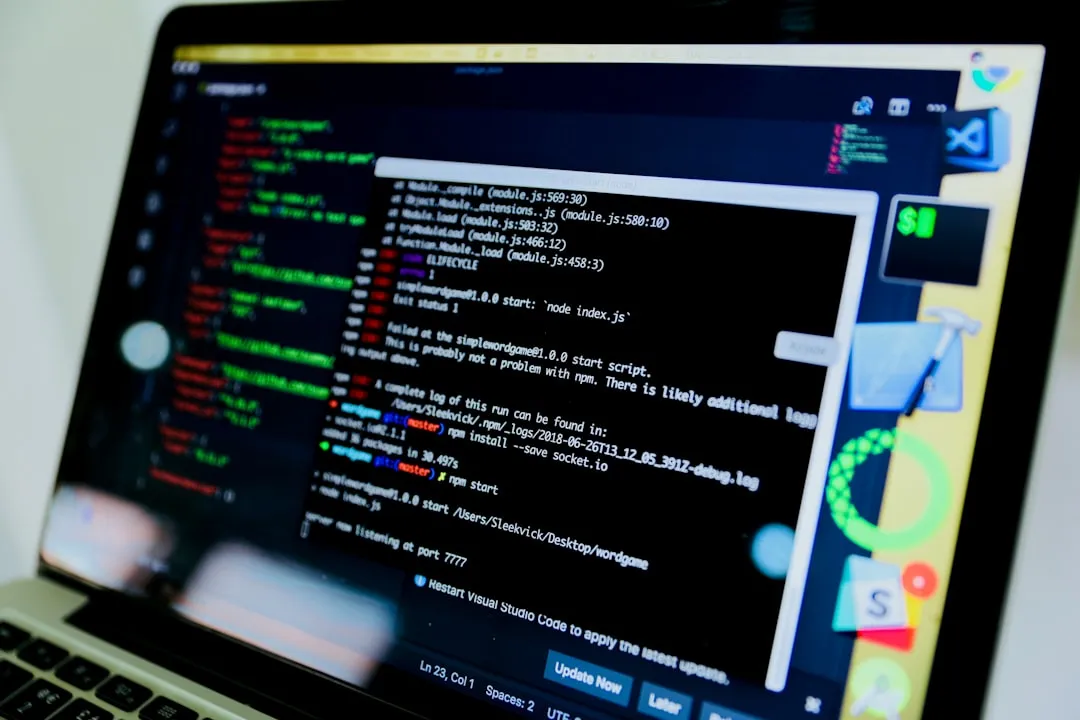

Security Challenges in AI-Driven Development

The increasing use of AI and automation in development is creating new security challenges, including the need to balance speed and security, according to Dark Reading. Application developers and security teams must work together to ensure that security is integrated into the development process. AI-driven development also introduces new attack surfaces, such as vulnerabilities in machine learning models and data poisoning attacks.

One key challenge in securing AI-driven applications is ensuring the integrity of the training data used to develop models. Compromised or biased training data can produce inaccurate or insecure models that attackers can exploit. To mitigate this risk, organizations should use secure and diverse training data sources and implement robust testing and validation procedures that check both model accuracy and security.
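One narrow piece of training data integrity, detecting files that changed after they were vetted, can be sketched with a checksum manifest. This is a minimal illustration using only the Python standard library. The function names (`build_manifest`, `find_tampering`) and the directory layout are assumptions made for this example, not part of any tool mentioned in the reporting. It will not catch data that was already poisoned before the manifest was created.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(data_dir: Path) -> dict[str, str]:
    """Map each file's path (relative to data_dir) to its digest."""
    return {
        p.relative_to(data_dir).as_posix(): sha256_of(p)
        for p in sorted(data_dir.rglob("*"))
        if p.is_file()
    }


def find_tampering(data_dir: Path, trusted: dict[str, str]) -> dict[str, list[str]]:
    """Compare the directory's current state against a trusted manifest."""
    current = build_manifest(data_dir)
    return {
        # Files whose contents no longer match the recorded digest.
        "modified": sorted(k for k in trusted if k in current and current[k] != trusted[k]),
        # Files recorded in the manifest that have disappeared.
        "missing": sorted(k for k in trusted if k not in current),
        # Files that were never vetted.
        "unexpected": sorted(k for k in current if k not in trusted),
    }
```

In practice the trusted manifest would be stored somewhere the training pipeline cannot write to, and a training job would refuse to start if any of the three lists is non-empty.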

Another challenge in securing AI-driven applications is the security of the deployment environment. This covers the infrastructure and platforms used to deploy models, along with secure protocols for communication and data storage. Organizations should use containerization and other forms of isolation to limit lateral movement if a breach occurs.

Mitigation Strategies

To protect sensitive data and prevent ransomware attacks, organizations should implement the following mitigation strategies:

- Regularly update systems and software to prevent exploitation of known vulnerabilities.

- Implement proper backup and disaster recovery procedures to ensure business continuity in case of an attack.

- Conduct regular security audits and risk assessments to identify potential vulnerabilities.

- Provide employee training on cybersecurity best practices, including identifying and avoiding suspicious emails and other types of social engineering attacks.

- Implement robust access controls, including multi-factor authentication and role-based access control.

- Use encryption to protect sensitive data both in transit and at rest.
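The access control item above can be illustrated with a small deny-by-default check that combines role-based permissions with a multi-factor requirement for sensitive actions. This is a sketch under stated assumptions: the role names, permission names, and the `Session` type are hypothetical and chosen only for illustration, and a real system would load its policy from a managed source rather than a hard-coded dictionary.

```python
from dataclasses import dataclass

# Hypothetical role-to-permission mapping, hard-coded for the example.
ROLE_PERMISSIONS = {
    "researcher": {"read_deidentified"},
    "data_steward": {"read_deidentified", "read_identified"},
    "admin": {"read_deidentified", "read_identified", "export"},
}

# Permissions treated as sensitive enough to require a completed MFA check.
MFA_REQUIRED = {"read_identified", "export"}


@dataclass(frozen=True)
class Session:
    user: str
    role: str
    mfa_verified: bool


def is_allowed(session: Session, permission: str) -> bool:
    """Deny by default: the role must grant the permission and MFA rules must be met."""
    granted = ROLE_PERMISSIONS.get(session.role, set())
    if permission not in granted:
        return False
    if permission in MFA_REQUIRED and not session.mfa_verified:
        return False
    return True
```

Unknown roles receive an empty permission set, so a misconfigured account fails closed instead of inheriting access.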

In addition to these technical measures, organizations should develop a comprehensive incident response plan that outlines how to respond to a ransomware attack. The plan should cover containment, eradication, recovery, and post-incident activities, and it should establish clear lines of communication and authority.

To ensure the secure development of AI-driven applications, development teams should:

- Implement secure coding practices and code reviews to identify potential vulnerabilities.

- Conduct regular security testing and vulnerability assessments to identify potential weaknesses.

- Use industry guidelines and best practices for secure AI-driven development.

- Foster a culture of collaboration between development and security teams to ensure that security is integrated into the development process.

- Use secure and diverse training data sources, and implement robust testing and validation procedures that check both model accuracy and security.

Recommendations and Takeaways

To protect sensitive data, prevent ransomware attacks, and ensure the secure development of AI-driven applications, organizations should prioritize implementing robust security measures, including regular updates, backups, and security audits. They should also provide employee training on cybersecurity best practices and implement robust access controls, including multi-factor authentication and role-based access control.

Organizations should also maintain a comprehensive incident response plan for ransomware that covers containment, eradication, recovery, and post-incident activities, with clear lines of communication and authority.

Additional best practices for securing AI-driven applications include:

- Using explainable AI techniques to provide transparency into model decision-making.

- Implementing robust monitoring and logging procedures to detect potential security incidents.

- Prioritizing actively maintained open-source and community-supported libraries and frameworks, and keeping them updated, to reduce the risk of unpatched vulnerabilities.

- Establishing clear guidelines and standards for secure AI-driven development, including using secure coding practices and code reviews.
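For the monitoring item in the list above, one simple detection pattern is a sliding-window counter that raises a flag when events arrive faster than expected. The sketch below is illustrative only. The class name `RateAlert`, the threshold, and the window size are assumptions, and the events being counted (for example, failed authentication attempts against a model endpoint) are hypothetical. Timestamps are assumed to arrive in non-decreasing order.

```python
from collections import deque


class RateAlert:
    """Flag when more than `threshold` events occur within `window_seconds`."""

    def __init__(self, threshold: int, window_seconds: float) -> None:
        self.threshold = threshold
        self.window_seconds = window_seconds
        self._timestamps: deque[float] = deque()

    def record(self, timestamp: float) -> bool:
        """Record one event; return True if the window now exceeds the threshold."""
        self._timestamps.append(timestamp)
        cutoff = timestamp - self.window_seconds
        # Drop events that have aged out of the window.
        while self._timestamps and self._timestamps[0] <= cutoff:
            self._timestamps.popleft()
        return len(self._timestamps) > self.threshold
```

A caller would feed `record` the timestamp of each logged event and route a `True` result to whatever alerting channel the organization already uses.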

By prioritizing cybersecurity and taking a proactive approach to securing AI-driven applications, organizations can better protect the integrity and confidentiality of sensitive data and reduce the likelihood of security incidents.